Soham Petkar

I am a Research Fellow at Sarvam AI, working on foundation models. My past research focused on efficient pre-training and evaluation of graph-language multimodal models, alongside exploring interpretability and alignment in real-world scenarios using the linear representation hypothesis. Topics that intrigue me include mechanistic interpretability, alignment, graph-language modeling, deterministic and stochastic reasoning mechanisms, and anything that imparts tacit knowledge to models.

Email / GitHub / Google Scholar / ORCID / LinkedIn / X / LessWrong


My research focuses on foundation models, graph-language multimodal models, interpretability, and alignment.


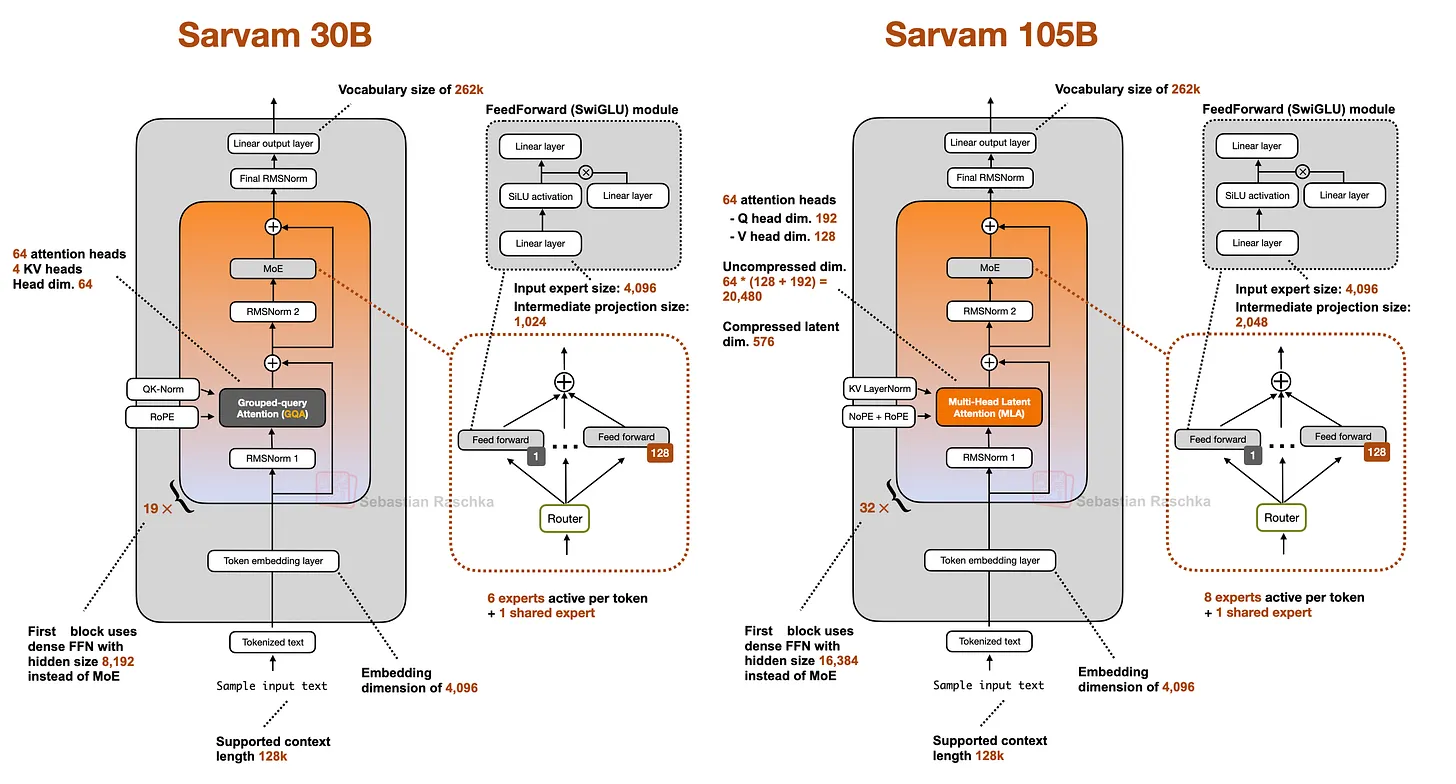

Sarvam 30B and 105B: Open-Source Reasoning LLMs Trained from Scratch in India

Soham Petkar and 14 other researchers at Sarvam AI

Sarvam AI, 2026

report / model (30B) / model (105B)

Sarvam 30B and 105B are Mixture-of-Experts reasoning models trained from scratch on large-scale, high-quality datasets. Both achieve state-of-the-art results on Indian language benchmarks and are competitive with frontier models on reasoning, coding, and agentic tasks. Open-sourced under Apache 2.0.


A Graph Talks, But Who's Listening? Rethinking Evaluations for Graph-Language Models

Soham Petkar*, Hari Aakash K*, Anirudh Vempati, Akshit Sinha, Ponnurangam Kumaraguru, Chirag Agarwal

ACL 2026 and NeurIPS NPGML Workshop, 2025

arxiv / paper / code

We present a comprehensive rethinking of evaluation methodologies for graph-language models, proposing new benchmarks and evaluation strategies that better capture the true capabilities of these multimodal systems.


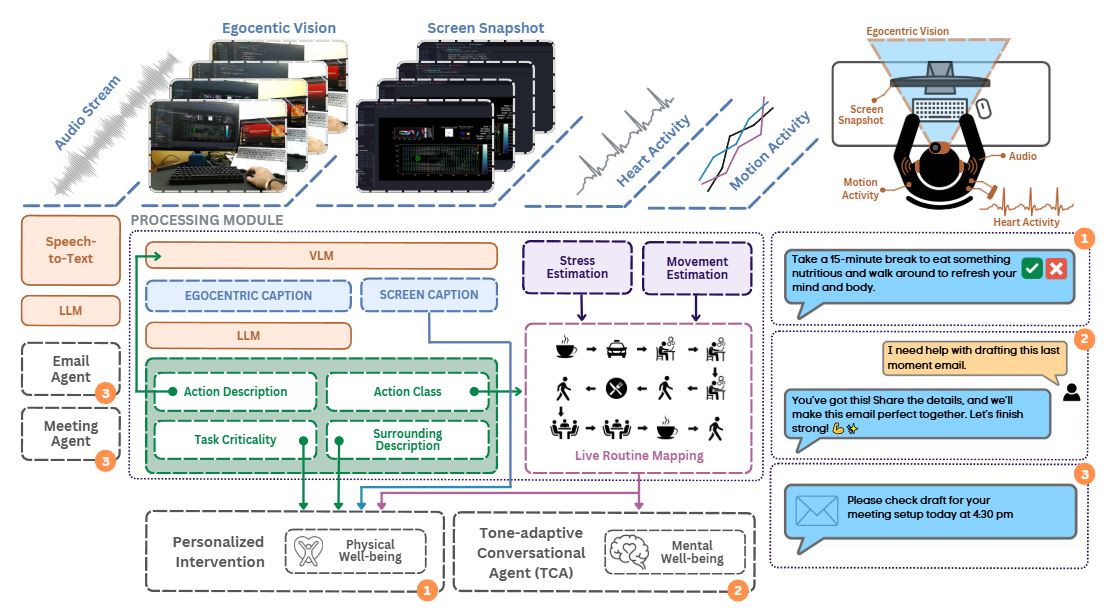

AdaptAI: A Personalized Solution to Sense Your Stress, Fix Your Mess, and Boost Productivity

Soham Petkar*, Rushiraj Gadhvi*, Priyansh Desai*, Sidhharth et al.

CHI LBW, 2025

arxiv / paper

AdaptAI is a multimodal AI system that enhances productivity and well-being by tailoring interventions to individual needs, integrating egocentric vision, audio, physiological signals, and LLM-driven workflows.


A selection of my projects spanning deep learning, computer vision, quantum ML, and more.


AdaptAI: A Personalized Solution to Sense Your Stress, Fix Your Mess, and Boost Productivity

AdaptAI is a multimodal AI system that enhances productivity and well-being by tailoring interventions to individual needs. Unlike generic tools, AdaptAI integrates egocentric vision, audio, physiological signals, and LLM-driven workflows to provide context-aware support. Its Tone-Adaptive Conversational Agent enhances productivity by adjusting responses to be more positive and supportive during stressful moments.

code


KhalasiIO: Detecting Situational Impairments With Reasoning-based Memory Bank

KhalasiIO detects and addresses temporary impairments caused by environmental factors such as noise, lighting, and stress. It integrates wearable devices and contextual memory for personalized, real-time interventions that reduce cognitive load and frustration. Promising results highlight scalability and improved accessibility.


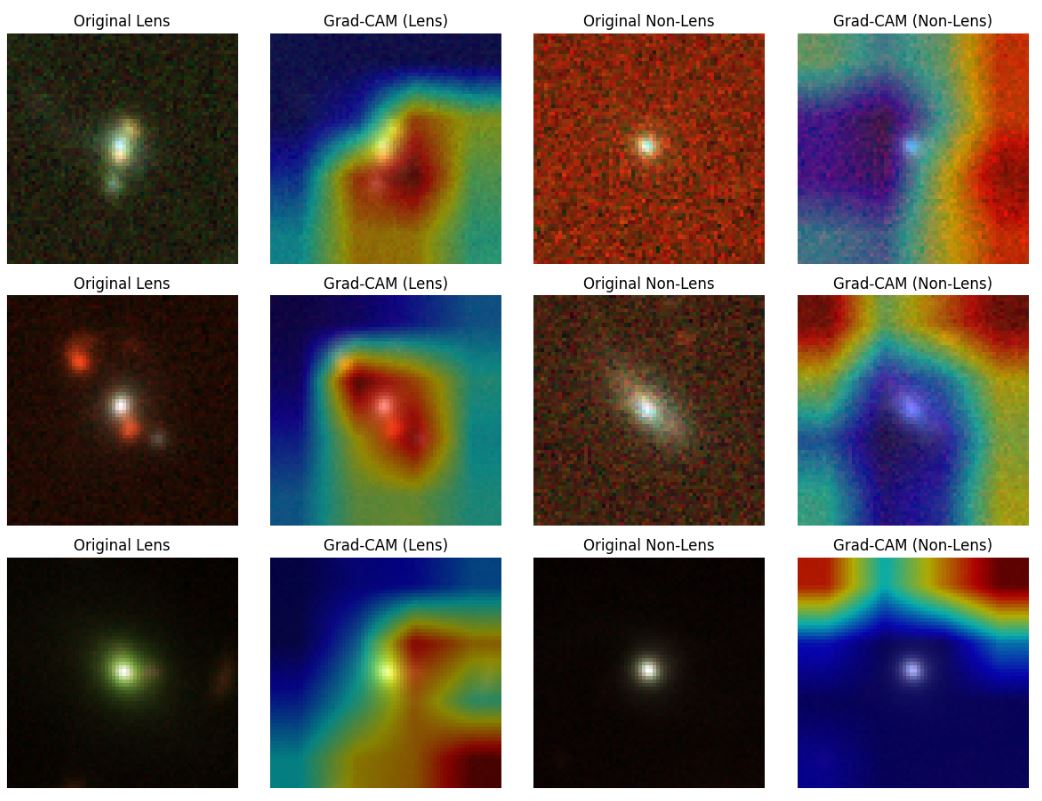

Strong Lensing Classification & Super-Resolution with Masked Autoencoders

Deep learning methods for astrophysics: explored ResNet-18 and Masked Autoencoder (MAE) models for classifying strong gravitational lensing images, and used ESRGAN to enhance their resolution. Included interpretability experiments with Grad-CAM to analyze model decisions and identify biases. Achieved improved super-resolution with physics-informed insights.

code


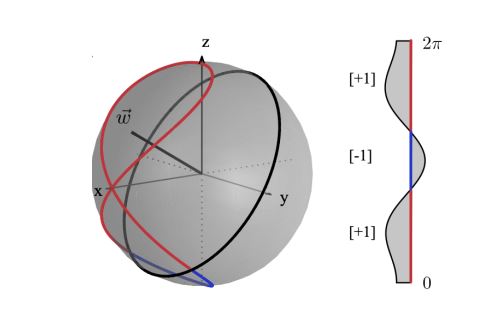

Latent Space Collapse? Understanding the Effects of Narrow Fine-Tuning on LLMs

Explored how narrow fine-tuning reshapes GPT-2's internal representations in a sentiment classification task. Using TransformerLens, we analyzed shifts in activation and embedding spaces, measured sentiment direction norms, and ran causal mediation experiments. Key finding: fine-tuning induces 'output squishing,' reducing diversity and potentially harming generalization.

code / paper


RAG Optimizations with Reinforcement Learning

A novel reinforcement learning-based optimization strategy to improve Retrieval-Augmented Generation (RAG) performance. Evaluates retriever performance across diverse conversational datasets using text analysis metrics and various retrieval techniques, and applies RL policies such as DQN, PPO, and Multi-Armed Bandits to reduce irrelevant content and improve response quality.


Quantum Machine Learning Classifiers

Implemented 24 combinations of feature maps, ansatzes, and optimizers to evaluate the efficacy of quantum kernel-based classifiers. Our best combination (Z-Feature map, EfficientSU2 ansatz, and L_BFGS_B optimizer) outperformed classical SVM across all metrics.

code


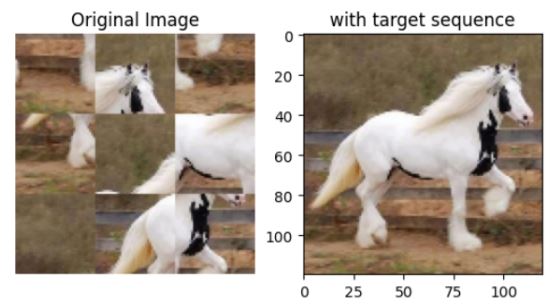

Visual Embeddings Solving Jigsaw Puzzles

A deep learning model using Graph Neural Networks and Autoencoders for representation learning via jigsaw puzzle paradigms. Introduced a Segmented Flow warp approach, achieving 60% validation accuracy, competing with SOTA methods. The original idea was to devise an efficient pre-training task, such as solving jigsaw puzzles, to improve downstream performance on visual tasks in the wild.

code / video / paper


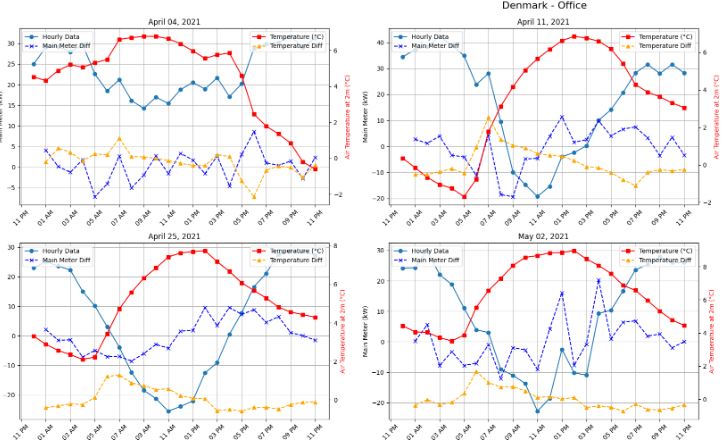

ADRENALIN Challenge: Non-Intrusive Load Disaggregation (NILM)

Developed an unsupervised algorithm for predicting heating and cooling loads from main meter readings. Ranked 8th worldwide in the ADRENALIN challenge.

code


WeCare: Winner, HackPlaksha '24 Healthcare Track

Developed WeCare, a desktop app that monitors slouching and blinking using body keypoint detection via Strided Transformer weights. It alerts users to improve posture and reduce eye strain.

website


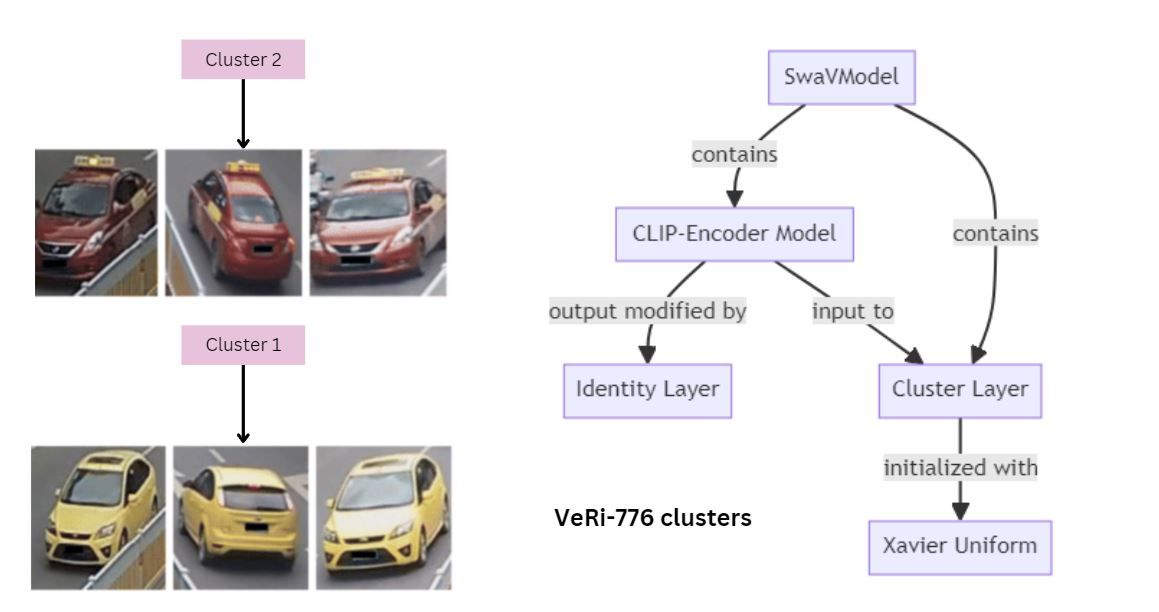

SwAV-Based Clustering for Vehicle Re-Identification

Applied SwAV (Swapping Assignments between Views) clustering to perform vehicle re-identification on the VeRi-776 dataset, assigning consistent clusters to the same vehicle across images to improve identification accuracy.


I write about AI, interpretability, and related topics on LessWrong. Below are some of my posts.


June 29, 2024

Exploring how narrow fine-tuning reshapes GPT-2's internal representations, analyzing shifts in activation and embedding spaces, and discovering that fine-tuning induces 'output squishing' that reduces diversity and potentially harms generalization.

Read on LessWrong →


Design and source code from Jon Barron's website